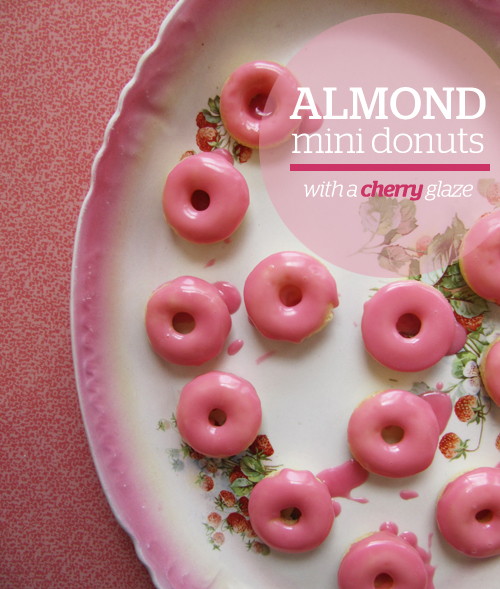

Because when I was seven I picked pink earrings over my birth stone. Because pink foods are always a party. Because cherries are the sole reason these are pink. Because when things are mini you can eat fifteen. Because I love you.

These guys are almond-y where the donuts are concerned and tartly cherry where the pink glaze is concerned. But don’t be concerned, these will make your heart smile.

Disclaimer: I blame the fact that I always spell “doughnuts” as “donuts” on the fact that I grew up with a Dunkin’ Donuts at the end of my street. I was known for going through the drive-thru for chocolate milk and a double chocolate donut like only a healthy teenager would.

Almond Mini Donuts + Cherry Glaze (makes 2 1/2 to 3 dozen)

Donut recipe adapted from these guys

Ingredients:

donuts –

- 1 cup all-purpose flour

- heaping 1/3 cup granulated sugar

- 1 t baking powder

- 1/4 t salt

- 6 T buttermilk

- 1/4 t almond extract

- 1 egg, lightly beaten

- 1 T butter, melted

glaze –

- 1 1/2 cups powdered sugar

- 1 T+ cherry juice*

equipment –

- mini donut pan (or mini muffin pan)

* Simply press thawed sour cherries through a fine mesh strainer set over the powdered sugar.

Directions:

- Preheat oven to 425°F. Spray mini donut pan with nonstick cooking spray.

- In a large mixing bowl, sift together the flour, sugar, baking powder and salt. Add buttermilk, almond extract, egg, and butter and beat until just combined. (Transfer batter to a sandwich bag and snip off the end for easy donut pan filling.) Pipe batter into each donut cup until approximately 1/2 full.

- Bake for 4-6 minutes or until the tops of the donuts spring back when touched. Let cool in pan for 4-5 minutes before removing. Finish donuts with cherry glaze.

- Make glaze by whisking together powdered sugar and cherry juice until thin enough for dunking, but thick enough to coat well. Dunk the tops of the donuts in the glaze, then set aside or pop in the fridge until the glaze sets. Enjoy!

YAY! Click HERE for a printable pdf of the recipe above.

If you don’t have cherries use raspberries or blackberries!

OMG…!!! They are so cute and tempting. Cannot really express my feeling in words 🙂

Yay! Thanks Priyanka!

I’ve never made donuts (I spell them that way too, just cause it’s easier, haha) but cherry almond is such a great combo!

Oh, these are a breeze since they’re baked. And way to be! Donut spellers for life!

yay! cherry and almond are besties for real. these are so cute!

BFFs, yo! Thanks!

I’ve never made donuts before, but these seem like an easy way to break into the donut world, since they’re baked. I also love almonds and cherries (there’s nothing quite like the amazing smell of almond extract!)

Yeah man! Almond extract smells like a dream.

Oh yes….Dunkin’ Donut guy almost FELL out the window to see you..he missed you when you skipped a day! That was sooo funny..and thus instilled in you a passion for the round food..in all colors! These are lovely!

He was my total BFF. 🙂

My AP Stylebook can bite me. Donuts are so much cuter than doughnuts. These pink little babies are too much!

Ha! Yeah! You tell that AP style what’s up, girlfriend.

That glaze is the prettiest and pinkest! I saw donut wrapping paper at a gift shop last weekend and I was seriously tempted. If it was MINI donut wrapping paper, I couldn’t have resisted 🙂

Thanks, lady! Donut wrapping paper sounds like the best wrapping paper ever! We should go to Papersource on our next lady date! They have a ton of awesome paper you’d love!

these are so cute and dainty! and i love that you used cherries for the pink glaze.

Dainty desserts are my fave!

“Because pink foods are always a party…” LOLZZZZ. Best one-liner ever. These donuts are beautiful.

haha! Thanks, Adrianna.

How sweet are these?! I love the color, I love that they’re mini, I love that you used cherries for the coloring… in short, I think I love everything about them. Can’t wait to try them!

Thanks! They are the most ladylike dainty little donut!

Beautiful!

Thanks, Sandi! They’re blushing!

Anything with almond extract in it is my fave. These look awesome!

Yeah girl!

Cherries and Almonds, my two favorite things in the world! LOVE THESE!

These were meant for you!

Oh my god these are so beautiful….looks so tempting. First time to your blog and you have a great site…happy to find your blog:)

Shucks! Thanks, Nina. 🙂

Hi, question, could I use Almond flour instead of wheat flour? I’m trying to lay off wheat…

I don’t think almond flour would work here. Sorry, Emma!

The directions say to add vanilla extract and vanilla seeds…but they are not listed in the ingredients list, neither is the wheat flour in question, am I missing something? Has anyone actually made these or do we all just think they look good?

Hey Cindy,

I made them! And I looked at the directions and fixed the directions to say almond extract instead of vanilla. There isn’t wheat flour anywhere in the ingredients/directions. Sorry about the confusion.